NIST (the National Institute of Standards and Technology) publishes tools and guidance that help organizations build AI systems that are safe, reliable, and ethical. Two key resources, AI RMF and Dioptra, help organizations manage risks and test AI models effectively. Here's a quick summary:

- AI RMF (AI Risk Management Framework): A structured process to identify, assess, and mitigate AI risks through four functions: Govern, Map, Measure, and Manage.

- Dioptra: An open-source platform for testing AI models, focusing on security (resilience to attacks) and performance (accuracy and efficiency).

- Key Goals:

  - Reduce bias in AI models

  - Test robustness against threats

  - Validate performance in diverse scenarios

  - Ensure compliance with safety standards

NIST's guidance is also designed to be compatible with international standards such as those from ISO/IEC and with regulations such as the EU AI Act, which makes it useful for organizations aiming to develop secure and transparent AI systems.

| Tool | Purpose | Focus Areas |
|---|---|---|
| AI RMF | Risk management framework | Governance, risk assessment |
| Dioptra | AI testing platform | Security, performance testing |

NIST AI Risk Management Framework (AI RMF)

The NIST AI Risk Management Framework (AI RMF) helps organizations evaluate and address risks associated with AI systems. It offers a structured way to identify, assess, and handle potential weaknesses in AI technologies.

Main Elements of AI RMF

The framework is built around four key functions designed to manage risks effectively:

- Govern: Focuses on creating oversight processes and policies to handle AI risks.

- Map: Involves documenting the context and identifying risks within AI systems.

- Measure: Uses defined metrics to evaluate and quantify risks.

- Manage: Implements controls and monitoring systems to mitigate and address risks.

These functions work together in a continuous cycle, enabling organizations to adapt their risk management practices as AI systems evolve.

| Function | Key Actions | Expected Outcomes |
|---|---|---|
| Govern | Develop policies; assign roles | Clear accountability and governance |
| Map | Analyze context; identify risks | Detailed understanding of potential risks |
| Measure | Assess risks; evaluate impacts | Data-driven insights into risk levels |
| Manage | Apply controls; monitor systems | Proactive risk reduction and oversight |
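
One way to picture how the four functions fit together is a small risk register. The sketch below is illustrative only: the `Risk` class, its field names, the 1 to 5 scales, and the likelihood-times-impact score are assumptions made for this example, not something the AI RMF prescribes.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Risk:
    """One row in a hypothetical AI risk register."""
    name: str
    owner: str        # Govern: who is accountable for this risk
    context: str      # Map: where and how the risk arises
    likelihood: int   # Measure: 1 (rare) to 5 (almost certain)
    impact: int       # Measure: 1 (minor) to 5 (severe)
    controls: List[str] = field(default_factory=list)  # Manage: mitigations in place

    def score(self) -> int:
        # Likelihood x impact is a common convention, not an AI RMF requirement.
        return self.likelihood * self.impact


def prioritize(risks: List[Risk]) -> List[Risk]:
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score(), reverse=True)


def needs_attention(risks: List[Risk], threshold: int = 12) -> List[Risk]:
    """Return high-scoring risks that have no controls yet."""
    return [r for r in prioritize(risks) if r.score() >= threshold and not r.controls]


if __name__ == "__main__":
    register = [
        Risk("Biased loan decisions", "Model risk team", "Credit scoring", 3, 5),
        Risk("Prompt injection", "Security team", "Support chatbot", 4, 4,
             controls=["input filtering"]),
        Risk("Stale training data", "Data team", "Demand forecasting", 2, 2),
    ]
    for risk in needs_attention(register):
        print(f"{risk.name}: score {risk.score()}, owner {risk.owner}")
```

Rerunning a check like this whenever a system or its context changes mirrors the continuous cycle described above: new entries come from Map, scores from Measure, and the `controls` field records what Manage has put in place.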

Applying AI RMF in Organizations

To integrate AI RMF effectively, start by evaluating your current AI systems and risk management practices. Form cross-disciplinary teams to ensure diverse expertise, and test the framework on high-priority systems before expanding its use throughout the organization.

Challenges and Considerations

Some common hurdles include inadequate resources, gaps in technical knowledge, and difficulties aligning the framework with existing workflows. Addressing these issues early can improve the framework's implementation and effectiveness.

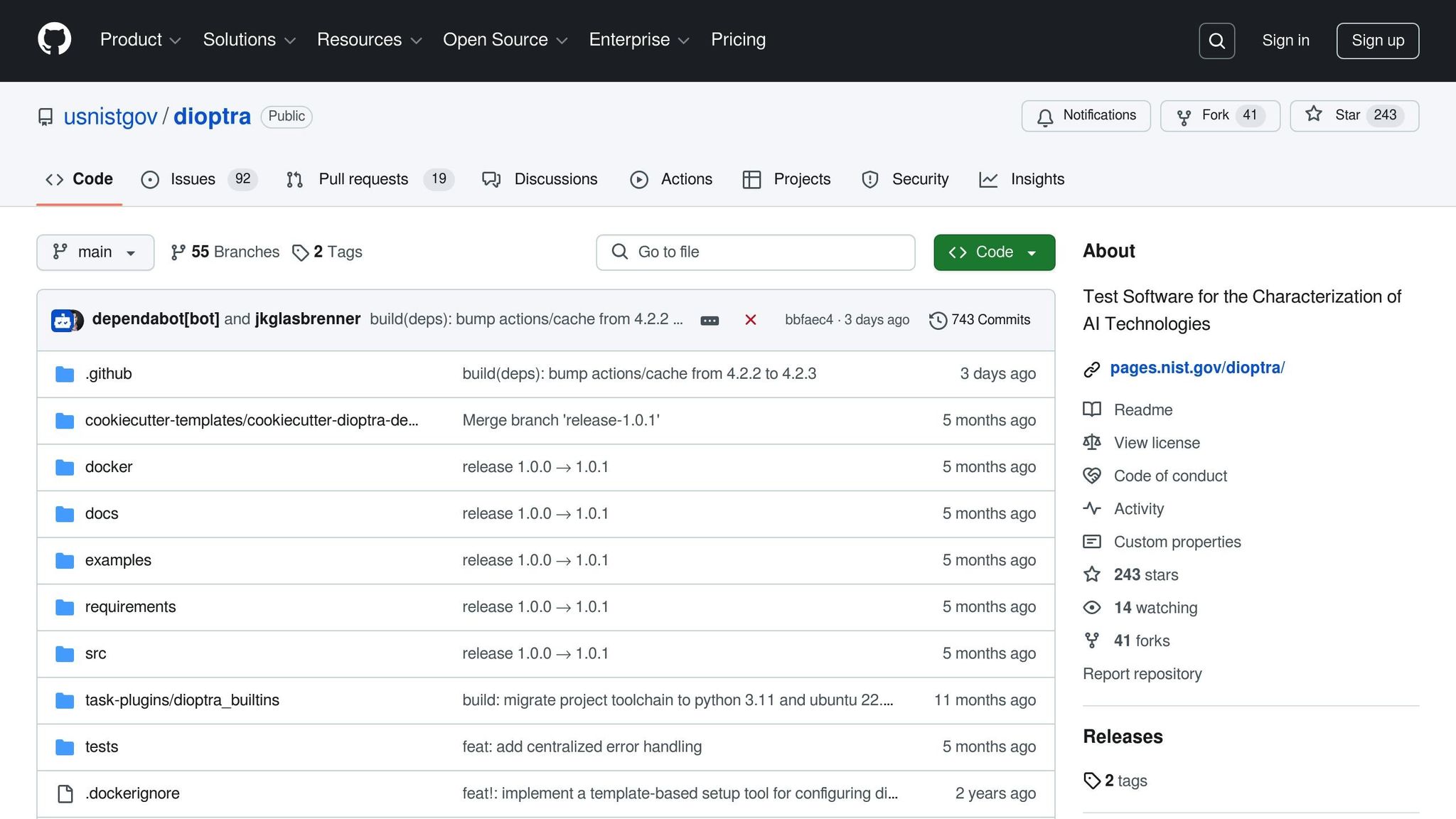

Dioptra: NIST's AI Testing Software

Dioptra is an open-source testbed from NIST designed to evaluate the security and performance of AI models. Developers and organizations can use it to run automated, repeatable tests and check whether their AI systems meet the standards they are held to.

Key Features

Dioptra focuses on two main areas:

- Security Assessment: Tests a model's ability to withstand attacks and identifies potential vulnerabilities.

- Performance Testing: Measures how well a model operates, including accuracy and efficiency.

Here's a quick breakdown:

| Testing Category | Focus Area | Purpose |
|---|---|---|
| Security | Resilience and vulnerability | Spot weaknesses and improve defenses |
| Performance | Accuracy and efficiency | Ensure smooth operation under set conditions |

How to Use Dioptra

Follow these steps to test your AI models with Dioptra:

1. Set Up the Environment

Install Dioptra using the recommended package manager and configure it based on the official guidelines.

2. Configure Your Tests

Define the parameters for your tests, including input data, expected outcomes, performance benchmarks, and security scenarios.

3. Run Tests and Review Results

Execute the automated tests and use Dioptra's reporting tools to analyze the findings. These reports help pinpoint security flaws and performance issues.

The platform's documentation includes templates and examples for common use cases, making it easier for teams to adopt structured testing workflows. Pair Dioptra's insights with NIST's safety standards to ensure a thorough evaluation of AI-related risks.
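
To make the security and performance categories concrete, here is a minimal sketch of the kind of comparison a robustness test performs: accuracy on clean inputs versus accuracy on deliberately shifted inputs. It does not use Dioptra's API. The toy `threshold_model`, the `worst_case_shift` perturbation, and the sample data are all assumptions made for illustration.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Sample = Tuple[float, int]  # (feature value, true label)


def threshold_model(x: float) -> int:
    """Toy classifier: predicts 1 when the feature is at least 0.5."""
    return 1 if x >= 0.5 else 0


def accuracy(model: Callable[[float], int], data: Sequence[Sample]) -> float:
    """Fraction of samples the model labels correctly."""
    correct = sum(1 for x, y in data if model(x) == y)
    return correct / len(data)


def worst_case_shift(data: Sequence[Sample], epsilon: float) -> List[Sample]:
    """Move each feature by epsilon toward the opposite class.

    For a one-dimensional threshold model this is the most damaging
    perturbation of size epsilon; real attacks are more involved.
    """
    return [(x - epsilon if y == 1 else x + epsilon, y) for x, y in data]


def robustness_report(model: Callable[[float], int],
                      data: Sequence[Sample],
                      epsilon: float) -> Dict[str, float]:
    """Compare accuracy on clean inputs with accuracy on shifted inputs."""
    clean = accuracy(model, data)
    attacked = accuracy(model, worst_case_shift(data, epsilon))
    return {
        "clean_accuracy": clean,
        "attacked_accuracy": attacked,
        "accuracy_drop": clean - attacked,
    }


if __name__ == "__main__":
    samples = [(0.1, 0), (0.3, 0), (0.45, 0), (0.55, 1), (0.7, 1), (0.9, 1)]
    for name, value in robustness_report(threshold_model, samples, 0.1).items():
        print(f"{name}: {value:.2f}")
```

The gap between the two accuracy figures is the useful number here: a model that scores well on clean data but drops sharply under small perturbations has a security weakness that a performance test alone would not reveal.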


NIST AI Safety Requirements

The National Institute of Standards and Technology (NIST) has outlined guidelines for developing and deploying artificial intelligence systems. These guidelines aim to ensure AI systems are reliable, secure, and ethically designed.

Official Documents

NIST provides several important documents that detail AI safety requirements and best practices:

| Document Type | Purpose | Key Focus Areas |
|---|---|---|
| AI Risk Management Framework | Core safety principles | Risk assessment, mitigation strategies |
| Special Publications (SP) | Technical guidelines | Implementation standards, testing protocols |
| Interagency Reports (IR) | Research findings | Emerging threats, safety developments |

These documents form the backbone of NIST's AI safety protocols. For instance, the AI Risk Management Framework (AI RMF) 1.0, released on January 26, 2023, serves as a cornerstone for these safety efforts.

Connection to Global Standards

NIST's guidelines are designed to align with international frameworks while addressing the specific needs of U.S. organizations. The requirements integrate with:

- ISO/IEC Standards: Ensuring compatibility with global standards like ISO/IEC 27001 for information security management.

- European Union AI Act: Addressing cross-border deployment considerations.

- IEEE Ethics Guidelines: Embedding ethical principles into AI development.

To meet both domestic and global compliance requirements, organizations should focus on three main areas:

1. Documentation and Transparency

Maintain detailed records of AI development, testing, and risk assessments. This creates a clear audit trail for compliance verification.

2. Continuous Monitoring

Regularly evaluate AI systems to ensure they meet safety benchmarks. Key activities include:

- Tracking performance metrics

- Scanning for security vulnerabilities

- Identifying and addressing bias

- Updating impact assessments

3. Stakeholder Engagement

Involve all relevant parties - technical teams, management, users, and communities - in overseeing AI systems. This helps ensure diverse perspectives are considered.
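
The continuous monitoring activities above can be partly automated. The following sketch checks a batch of predictions against two thresholds, one for accuracy and one for the gap in positive-prediction rates between groups (often called the demographic parity difference). The record layout, function names, and threshold values are assumptions chosen for this example; NIST does not specify them.

```python
from typing import Dict, List, Sequence, Tuple

Record = Tuple[str, int, int]  # (group, prediction, true label)


def batch_accuracy(records: Sequence[Record]) -> float:
    """Fraction of records where the prediction matches the label."""
    return sum(1 for _, pred, label in records if pred == label) / len(records)


def positive_rate_by_group(records: Sequence[Record]) -> Dict[str, float]:
    """Share of positive predictions within each group."""
    totals: Dict[str, int] = {}
    positives: Dict[str, int] = {}
    for group, pred, _ in records:
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + pred
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records: Sequence[Record]) -> float:
    """Largest difference in positive-prediction rate between any two groups."""
    rates = list(positive_rate_by_group(records).values())
    return max(rates) - min(rates)


def monitoring_alerts(records: Sequence[Record],
                      min_accuracy: float = 0.9,
                      max_parity_gap: float = 0.1) -> List[str]:
    """Return human-readable alerts for any threshold that is breached."""
    alerts = []
    acc = batch_accuracy(records)
    gap = parity_gap(records)
    if acc < min_accuracy:
        alerts.append(f"accuracy {acc:.2f} is below {min_accuracy:.2f}")
    if gap > max_parity_gap:
        alerts.append(f"parity gap {gap:.2f} is above {max_parity_gap:.2f}")
    return alerts


if __name__ == "__main__":
    batch = [
        ("a", 1, 1), ("a", 1, 1), ("a", 0, 0), ("a", 1, 0),
        ("b", 0, 0), ("b", 0, 1), ("b", 0, 0), ("b", 1, 1),
    ]
    for alert in monitoring_alerts(batch):
        print(alert)
```

Writing each run's alerts to a log along with the date and model version would also contribute to the audit trail described under Documentation and Transparency.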

These requirements are designed to stay flexible as technology evolves while still prioritizing safety. Organizations should stay updated with NIST's latest guidance and adjust their compliance strategies accordingly.

NIST's Next Steps in AI Safety

NIST continues its work to strengthen AI safety by advancing research and refining its framework and tooling, namely AI RMF and Dioptra.

The organization is focused on improving testing methods, uncovering biases, and creating standardized safety metrics. These efforts aim to turn theoretical progress into practical standards that the AI community can adopt. This work builds on the foundations outlined in earlier tools and frameworks.

For updates on research, publications, and events, stakeholders are encouraged to check the NIST website regularly for the most recent developments and recommendations.

Summary

NIST's AI tools, AI RMF and Dioptra, combine risk management strategies with hands-on testing to help secure AI systems. The AI RMF provides a framework for identifying and addressing risks, while Dioptra allows developers to thoroughly test AI systems. These tools turn general safety concepts into practical evaluations, helping to safeguard AI as it continues to advance.